Multiple linear regression aims to find a linear relationship between variables in situations where there are several independent variables. The independent variables can be either continuous or qualitative; the dependent variable, however, must be measured on a continuous scale.

Partial correlation is the correlation of two variables while controlling for a third or more other variables. For example, $r_{12.34}$ is the correlation of variables 1 and 2, controlling for variables 3 and 4. If the partial correlation $r_{12.34}$ is equal to the uncontrolled correlation $r_{12}$, the control variables have no effect. If the partial correlation is near 0, the original correlation is spurious.

While simple correlations tell something about multicollinearity, the preferred method of assessing multicollinearity is to compute the determinant of the correlation matrix. Determinants near zero indicate that some or all independent variables are highly correlated.
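The two diagnostics described here can be tried on synthetic data. The following is a minimal sketch, assuming NumPy is available. The helper name `partial_corr` and the simulated variables `x1`, `x2`, and `z` are hypothetical and do not come from the text. The partial correlation is computed by correlating the residuals left after regressing each variable on the controls, which is one common way to obtain it.

```python
import numpy as np

def partial_corr(data, i, j, controls):
    """Correlation of columns i and j after removing the linear effect
    of the control columns (hypothetical helper, residual method)."""
    # Design matrix for the controls, with a constant column
    Z = np.column_stack([np.ones(len(data)), data[:, controls]])
    # Residuals of each variable after a least-squares fit on the controls
    res_i = data[:, i] - Z @ np.linalg.lstsq(Z, data[:, i], rcond=None)[0]
    res_j = data[:, j] - Z @ np.linalg.lstsq(Z, data[:, j], rcond=None)[0]
    return np.corrcoef(res_i, res_j)[0, 1]

# Simulated data (an assumption for illustration): x1 and x2 are both
# driven by z, so their simple correlation is high but spurious.
rng = np.random.default_rng(0)
z = rng.normal(size=500)
x1 = z + 0.3 * rng.normal(size=500)
x2 = z + 0.3 * rng.normal(size=500)
data = np.column_stack([x1, x2, z])

r12 = np.corrcoef(x1, x2)[0, 1]        # uncontrolled correlation
r12_3 = partial_corr(data, 0, 1, [2])  # correlation controlling for z

# Determinant of the correlation matrix: near zero signals multicollinearity
det_R = np.linalg.det(np.corrcoef(data, rowvar=False))

print(r12, r12_3, det_R)
```

In this setup the simple correlation is high while the partial correlation falls close to zero, and the determinant is small because all three variables are strongly intercorrelated.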

$R^2$ is also called the coefficient of multiple determination. It is computed as $R^2 = 1 - SSE/SST$, where $SSE = \sum_i (Y_i - \hat{Y}_i)^2$ is the error sum of squares, $Y_i$ is the actual value of $Y$ for the $i$th case, and $\hat{Y}_i$ is the regression prediction for the $i$th case. $SST = \sum_i (Y_i - \bar{Y})^2$ is the total sum of squares.

Adjusted R-Square: When there are a large number of independent variables, $R^2$ may become artificially large, simply because chance variations in some independent variables "explain" small parts of the variance of the dependent variable. It is therefore essential to adjust the value of $R^2$ as the number of independent variables increases. With only a few independent variables, $R^2$ and adjusted $R^2$ will be close. With a large number of independent variables, adjusted $R^2$ may be noticeably lower.

Multicollinearity is the intercorrelation of the independent variables. Values of $r^2$ between independent variables near 1 violate the assumption of no perfect collinearity, while high $r^2$ values increase the standard error of the regression coefficients and make assessment of the unique role of each independent variable difficult or impossible.
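As a small worked illustration of these quantities, here is a sketch assuming NumPy and the usual definitions $R^2 = 1 - SSE/SST$ and adjusted $R^2 = 1 - (1 - R^2)(n - 1)/(n - k - 1)$, with $n$ cases and $k$ independent variables. The adjustment formula is not given in the text above, so treat it as an assumption, and the data values are made up for the example.

```python
import numpy as np

def r_squared(y, y_hat):
    """R^2 = 1 - SSE/SST for actual values y and predictions y_hat."""
    sse = np.sum((y - y_hat) ** 2)       # error sum of squares
    sst = np.sum((y - np.mean(y)) ** 2)  # total sum of squares
    return 1.0 - sse / sst

def adjusted_r_squared(r2, n, k):
    """Adjusted R^2 for n cases and k independent variables
    (standard formula, assumed here rather than taken from the text)."""
    return 1.0 - (1.0 - r2) * (n - 1) / (n - k - 1)

# Made-up actual values and regression predictions
y = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y_hat = np.array([1.1, 1.9, 3.2, 3.8, 5.0])

r2 = r_squared(y, y_hat)
adj_few = adjusted_r_squared(r2, n=5, k=1)   # one independent variable
adj_many = adjusted_r_squared(r2, n=5, k=3)  # three independent variables

print(r2, adj_few, adj_many)
```

For the same $R^2$ and sample size, the adjusted value drops as `k` grows, which is the penalty for adding independent variables described above.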

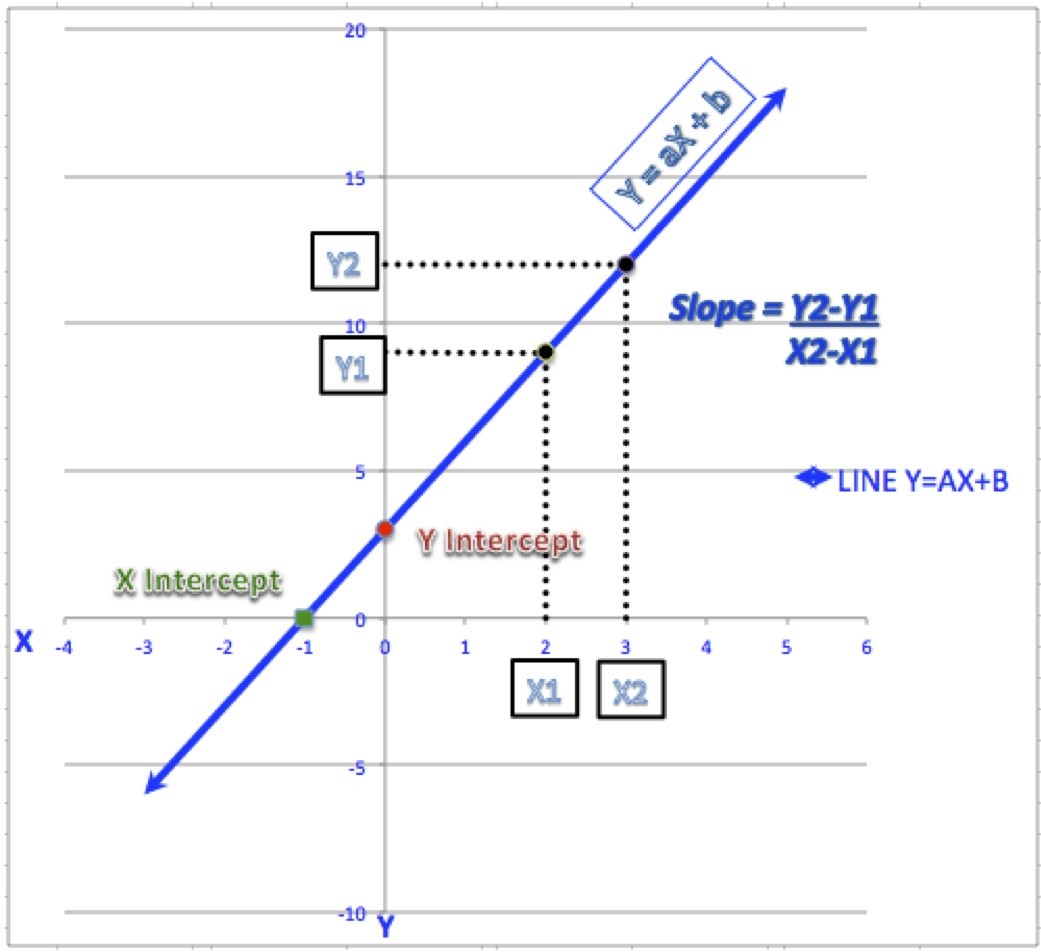

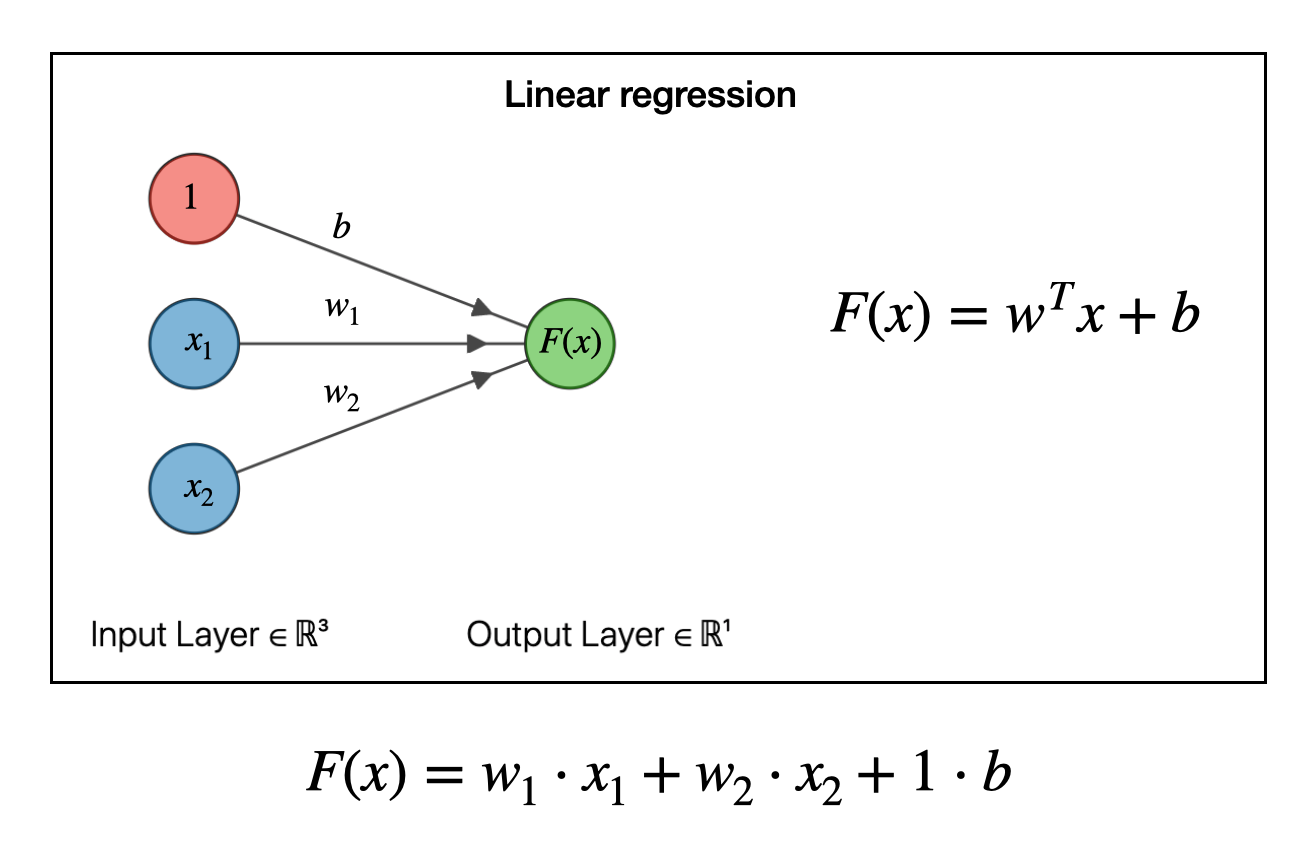

In multiple regression analysis, the relationship between one dependent variable and several independent variables (called predictors) is analyzed. The regression equation takes the form $Y = b_0 + b_1 x_1 + b_2 x_2 + \dots + b_n x_n + e$, where $Y$ is the dependent variable, the $b$'s are the regression coefficients for the corresponding $x$ (independent) terms, $b_0$ is a constant or intercept, and $e$ is the error term reflected in the residuals. The parameters of the regression equation are estimated using the ordinary least squares (OLS) method.

Ordinary least squares: This method derives its name from the criterion used to draw the best-fit regression line: a line such that the sum of the squared deviations of all the points from the line is minimized.

Intercept: The intercept, $b_0$, is where the regression plane intersects the Y-axis. It is equal to the estimated $Y$ value when all the independents have a value of 0.

Regression coefficient: Regression coefficients $b_i$ are the slopes of the regression plane in the direction of $x_i$. Each regression coefficient represents the net effect the $i$th variable has on the dependent variable, holding the remaining $x$'s in the equation constant.

Beta weights: Beta weights are the regression coefficients for standardized data.
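The estimation step can be sketched in a few lines. This is a minimal example assuming NumPy, with simulated data and hypothetical helper names (`fit_ols`, `standardize`) chosen for illustration. It fits the intercept and regression coefficients by ordinary least squares, then obtains beta weights by refitting on standardized variables.

```python
import numpy as np

def fit_ols(X, y):
    """Return [b0, b1, ..., bk] minimizing the sum of squared residuals."""
    A = np.column_stack([np.ones(len(y)), X])  # constant column for b0
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef

def standardize(a):
    """Rescale each column to mean 0 and standard deviation 1."""
    return (a - a.mean(axis=0)) / a.std(axis=0)

# Simulated data (an assumption): Y = 1.5 + 2.0*x1 - 0.5*x2 + small error
rng = np.random.default_rng(1)
n = 200
X = rng.normal(size=(n, 2))
y = 1.5 + 2.0 * X[:, 0] - 0.5 * X[:, 1] + 0.1 * rng.normal(size=n)

b = fit_ols(X, y)  # b[0] is the intercept, b[1:] are the slopes

# Beta weights: coefficients from the fit on standardized data
beta = fit_ols(standardize(X), standardize(y))[1:]

print(b, beta)
```

The fitted `b` should land close to the coefficients used to simulate the data. The beta weights equal each slope rescaled by the ratio of the predictor's standard deviation to that of the dependent variable, which makes them comparable across predictors measured in different units.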